ESP32: first hands-on with MAX7219 and WiFi

For an investment of 80-90 minutes I am quite happy with the result and amazed at what sophisticated capabilities are offered at such an easily accessible level.

What has been achieved so far?

* setting up a working Arduino IDE 1.8.5 environment with the ESP32 toolchain on a Win7 laptop (not my favorite, but the Linux PC was blocked)

* setting the proper options in the IDE so that the examples compile and upload via serial to the board; learning how to use the integrated serial monitor

* finding the proper pin setup for the MAX7219 LED display (just one module – for now) and setting up the library

* playing around with the WiFi-functionality (scanning for networks)

* putting both tasks (network scan and LED output) together and running into the first “I need threads” pit

[Video was taken at an early stage: a static string is displayed.]

Of course, THIS is nothing, just the very first tiny baby-step. Everyone is able to achieve this, because almost no creativity needs to be invested.

But: configuring all the necessary tools was already a pain in the ass. Of course, just read the manual(s) [..]

But this is not an “unwrap and press start” toy, so the learning curve was steeper than expected.

But I did it. And I am looking forward to more, especially to putting a threaded application on the MCU, because – why run just one thread when you can have two? 😉

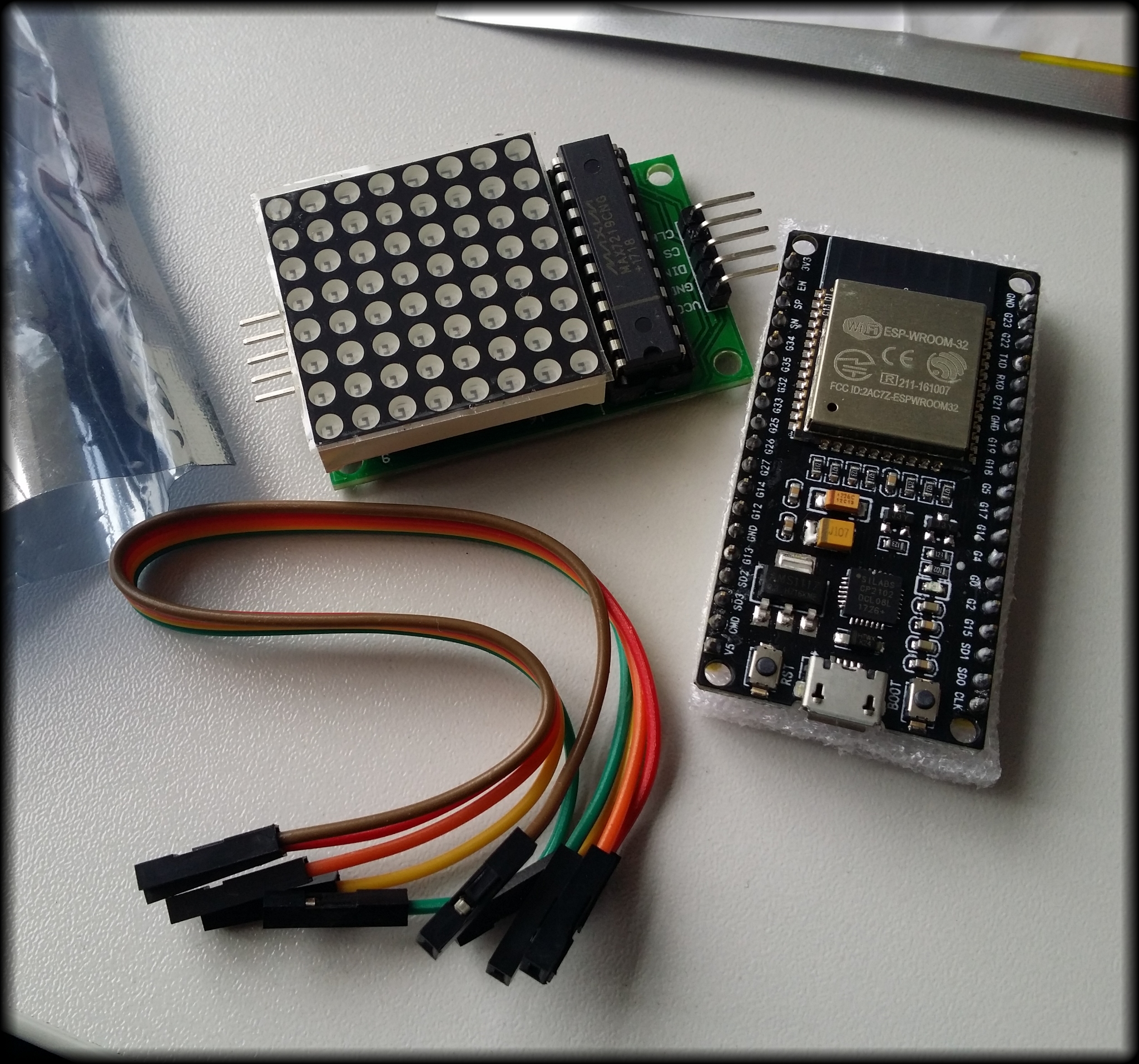

[The MAX7219 and the ESP32-dev-board from MakerHawk hours before the first test run. Battery pack not included.]


MPC: adding additional DEFINES

Some weeks ago I noticed how the qDebug() output could be enriched, so that in bigger solutions with a lot of different “unknown” components a reported error can be pinned down immediately. It also saves you from always writing __FILE__ and __LINE__. Refers to this post.

The problem was that I did not know how to make the MPC build system put this DEFINE into the vcxproj files.

It can be done via the “macros”-statement!

So I worked on my Python skills and wrote a short script which walks the given path recursively and fixes all mpc files: it determines the position of the line with the last closing brace “}” and adds the new line just before it. Of course, the experts know several thousand ways to improve that script, but I am currently happy with it. It works, it is debuggable (.sh, I am looking at you!) and I will also use the skeleton for some other tasks.

It can be found (like most Python-snippets) at: https://github.com/marcelpetrick/pythonCollection

```python
import fileinput
import sys
import os

#------------------------------------------
def addMacroToFile(filename, newStatement):
    #print("called addMacroToFile:", filename, determinePositionOfLastBrace(filename))
    returnValue = "fail"

    posLastBrace = determinePositionOfLastBrace(filename)
    posCurrentLine = 1
    # inplace=True redirects stdout into the file that is being read
    for line in fileinput.input(filename, inplace=True):
        # add additional line in case of matching last brace
        if posCurrentLine == posLastBrace:
            sys.stdout.write(newStatement)
            sys.stdout.write("\n") # newline, else it would be put just before the brace
            returnValue = "success"

        sys.stdout.write(line) # prints without additional newline (on windows)
        posCurrentLine += 1

    return returnValue

#------------------------------------------
# return the position of the last brace.
# possible improvement: in case of some malformed mpc: skip ..
def determinePositionOfLastBrace(filename):
    lastPos = -1
    position = 1
    with open(filename, "r") as file: #has implicit close - which is nice
        for line in file:
            if "}" in line:
                lastPos = position
            position += 1

    return lastPos

#------------------------------------------
# find all mpb/mpc files
def fixAllFilesRecursively(path, suffix, newStatement):
    for dirname, dirnames, filenames in os.walk(path):
        for filename in filenames:
            full_path = os.path.join(dirname, filename)
            if full_path.endswith(suffix):
                print(full_path)
                wasSuccessful = addMacroToFile(full_path, newStatement)
                if wasSuccessful == "success":
                    print(" modified :)")

        # prevent recursion into those subdirs with meta-data
        if '.svn' in dirnames:
            dirnames.remove('.svn')
        if '.git' in dirnames:
            dirnames.remove('.git')

#------------------------------------------
#------------------------------------------
path = r"D:\Repo_INS_RADARNX" # raw string, so the backslash is not read as an escape
suffix = "mpc"
newStatement = " macros += QT_MESSAGELOGCONTEXT"
# TODO possible improvement: prevent double application of the added macro ..
fixAllFilesRecursively(path, suffix, newStatement)
```
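The TODO at the end of the script (preventing double application of the macro) could be handled by checking whether the statement is already present before touching the file. The following is only a minimal sketch of that idea, not part of the original script: the names `already_contains`, `add_macro_once` and `demo` are hypothetical, the file is rewritten as a whole instead of via `fileinput`, and the mpc content in `demo` is made up for illustration.

```python
import os
import tempfile

STATEMENT = "  macros += QT_MESSAGELOGCONTEXT"


def already_contains(filename, statement):
    """Return True if a line of the file equals the statement (whitespace ignored)."""
    wanted = statement.strip()
    with open(filename, "r") as handle:
        return any(line.strip() == wanted for line in handle)


def add_macro_once(filename, statement):
    """Insert the statement before the line with the last '}' unless it is already there.

    Returns True if the file was modified, False otherwise.
    """
    if already_contains(filename, statement):
        return False

    with open(filename, "r") as handle:
        lines = handle.readlines()

    # index of the last line containing a closing brace; None for a malformed file
    last_brace = None
    for index, line in enumerate(lines):
        if "}" in line:
            last_brace = index
    if last_brace is None:
        return False

    lines.insert(last_brace, statement + "\n")
    with open(filename, "w") as handle:
        handle.writelines(lines)
    return True


def demo():
    """Apply the macro twice to a throwaway file; return both results and the final text."""
    with tempfile.TemporaryDirectory() as folder:
        path = os.path.join(folder, "demo.mpc")
        with open(path, "w") as handle:
            handle.write("project(demo) {\n  exename = demo\n}\n")  # made-up content
        results = [add_macro_once(path, STATEMENT) for _ in range(2)]
        with open(path, "r") as handle:
            return results, handle.read()
```

Calling `demo()` runs the insertion twice on a temporary file; the second call should leave the file untouched, which is what makes it safe to run the fixer over the same tree again.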

Preventing the crash of the performance-profiler from Visual Studio (2013-2017) due to Meltdown-/Spectre-patches

I needed some analytical help from Visual Studio (due to the fact that MTuner and AQTime could not work properly with our suite). So, I build my solution, fire up the “Performance Profiling” in VS2015 and *zump* the computer reboots.

Discussions and investigations led to the thesis that some Windows patches are the culprit, because they prevent the previously used hooks from working.

So, running these two lines in an admin-enabled cmd.exe (plus a reboot) brought relief:

```bat
reg add "HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Memory Management" /v FeatureSettingsOverride /t REG_DWORD /d 3 /f
reg add "HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Memory Management" /v FeatureSettingsOverrideMask /t REG_DWORD /d 3 /f
```

Python-advanced level-seminar: lifelong learning

I am currently traveling back from my very first paid educational leave. Proper selection, arrangement and preparation led to some awesome impressions: about the capabilities of Python and about the city of Detmold.

Daniel Warner led us – an assembly of five inquisitive men in the age range from 30 to 60 – through the details and specialties of that programming language. I learned a lot, in detail:

- basic structures; list comprehension

- classes; objects; overrides; imports; representation; init-method

- dictionaries for caching results (memoisation)

- decorators (nice for printing, caching and thread-safety)

- descriptors, properties and slots, kwargs

- (multi-)inheritance and its quirks

- recursive functions; functional programming

- threads, synchronisation, atomic access
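Two of the topics above, dictionaries for caching results and decorators, combine nicely. The following is a minimal sketch written for this post and not taken from the seminar material: `memoise` and `fibonacci` are illustrative names, and the `cache` attribute is attached only so that the example can be inspected. The standard library already offers `functools.lru_cache` for the same purpose.

```python
import functools


def memoise(function):
    """Cache the results of a function in a dictionary keyed by its positional arguments."""
    cache = {}

    @functools.wraps(function)  # keep the name and docstring of the wrapped function
    def wrapper(*args):
        if args not in cache:
            cache[args] = function(*args)
        return cache[args]

    wrapper.cache = cache  # exposed only so the example can look inside
    return wrapper


@memoise
def fibonacci(n):
    """Naive recursive Fibonacci; each value is computed only once thanks to the cache."""
    return n if n < 2 else fibonacci(n - 1) + fibonacci(n - 2)
```

The function body stays free of any caching logic; the decorator adds it from the outside.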

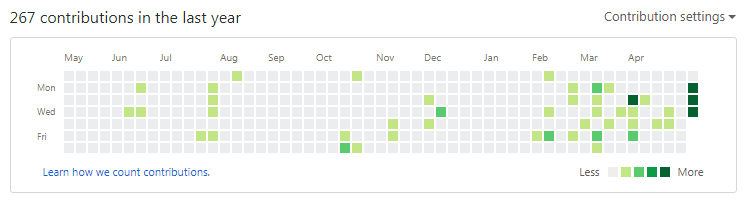

I put all the exercises (full script with my own annotations) into a Git-repository right from the beginning and published it: https://github.com/marcelpetrick/Python_FortgeschrittenenSeminar/.

Which also makes for a nice view of the GitHub history 🙂

I chose Python on purpose: I see and plan for ways to use it with artificial intelligence (TensorFlow bindings ..), for microcontroller programming (the ESP can run MicroPython), for the Raspberry (the tumblr-upload-script for the catcam is currently also Python) and for daily data-manipulation tasks which are currently done more or less in Bash, AutoIt or Batch (and then: write it once, run it on both Linux and Win).

This was a great choice! And I want to thank my wife for supporting these stays away from home and my plan to get the education I wanted 🙂 And I got a small certificate – but that’s just icing on the cake.

My plan for a first real exercise is to re-implement the “find all islands in the given map” programming challenge. This will be fun. Getting to know some specialties and what properties/slots mean in the Python context (compared to the Qt ones) was nice. And the decorators are a really powerful way to add special functionality to methods without bloating them and without blocking the view of the business logic.
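For the island challenge, a first attempt might look like the sketch below. The exact rules of the challenge are not given here, so everything is an assumption: the map is a list of strings, `#` marks land, and cells count as connected only horizontally or vertically. `count_islands` and `EXAMPLE_MAP` are illustrative names.

```python
def count_islands(grid, land="#"):
    """Count groups of land cells that are connected horizontally or vertically."""
    seen = set()
    islands = 0
    for row, line in enumerate(grid):
        for col, cell in enumerate(line):
            if cell != land or (row, col) in seen:
                continue
            # unvisited land cell: a new island starts here, flood-fill it iteratively
            islands += 1
            seen.add((row, col))
            stack = [(row, col)]
            while stack:
                r, c = stack.pop()
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    inside = 0 <= nr < len(grid) and 0 <= nc < len(grid[nr])
                    if inside and grid[nr][nc] == land and (nr, nc) not in seen:
                        seen.add((nr, nc))
                        stack.append((nr, nc))
    return islands


# assumed input format: '#' is land, '.' is water
EXAMPLE_MAP = [
    "##..#",
    "#...#",
    "..#..",
    "...##",
]
```

The explicit stack avoids hitting the recursion limit on large maps, which a purely recursive flood fill would run into.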

business card: put everything technically possible in the balance

Some weeks ago I thought that it would be nice to have some business cards, and then I started to think about the data I want to share, the design, and what could underline my claim to be above average.

A paper-card with all data: standard.

Adding QR-codes to lead the user to my homepage: nice.

Adding another, bigger QR-code to the back to give him all the aforementioned data plus address: better.

Putting an NFC NTAG216 sticker on the back which, when read, delivers ALL the information with the slightest effort: my level!

And yes, I think you noticed my pride. I am pleased with the result 🙂

hint: created the QR-codes with the help of QR-monkey – well designed and comfortable to use

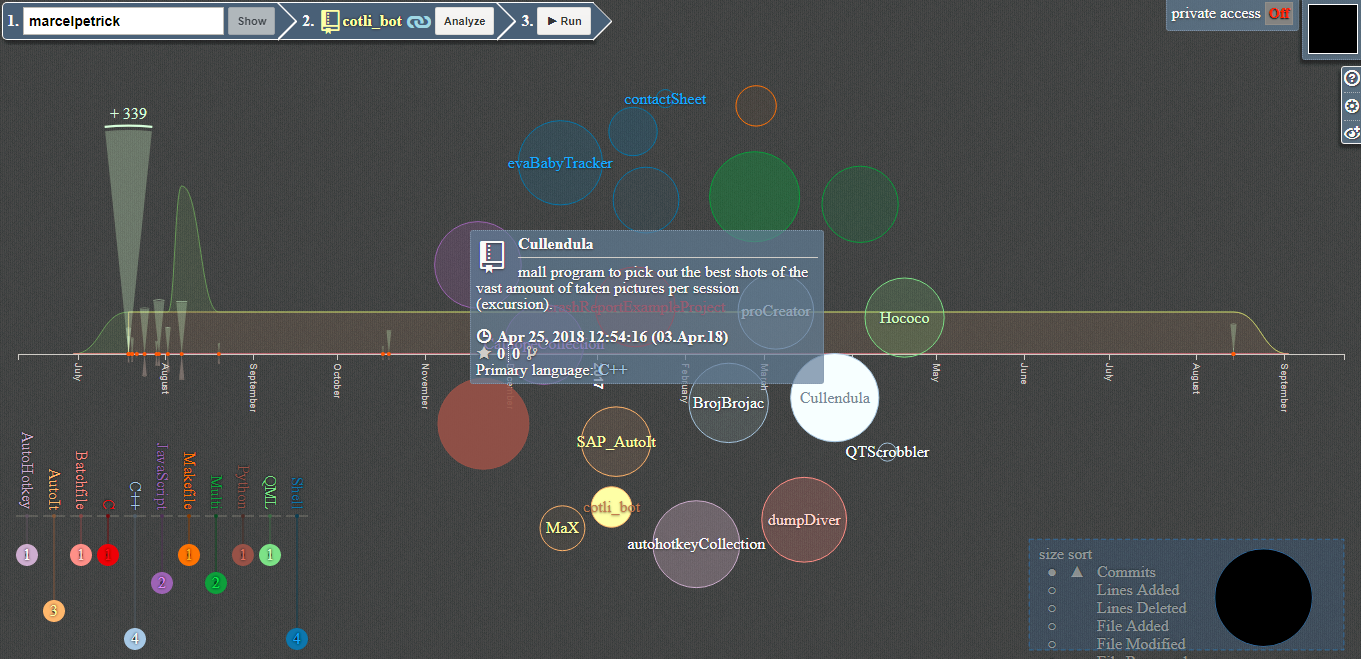

visualise your github <3

Will create a nice overview of your projects, the amount of commits and the used languages. I like it 🙂

http://ghv.artzub.com/#user=marcelpetrick

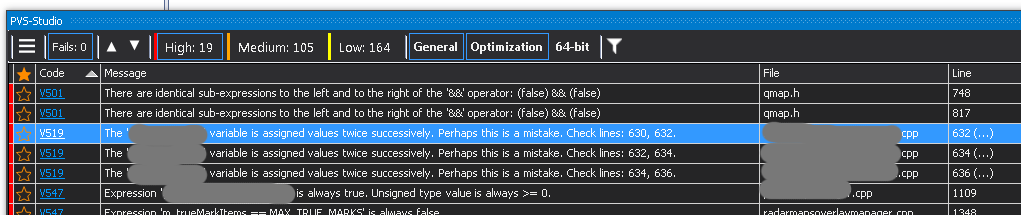

static code analysis: PVS Studio

I have been using a new static code analysis tool for some weeks now and it has turned out to be quite handy: PVS Studio.

It integrates quite well into Visual Studio and you can run the analysis on single projects or the whole solution. Doing this can take a while, because all includes are analyzed as well, which is nice. For a single developer a trial license is enough for evaluation, if you can do without the really nice “jump to the culprit” functionality.

It looks like it detects more errors than cppcheck (another tool which I have been using for years on several platforms) and without doubt: none of the reported lines were false positives!

Of course, there is always the discussion with colleagues about whether those tools help. But I will repeat it again: why not buy & apply them and get a lot of troubleshooting for zero investment of creativity and time?

The screenshot shows (for instance) that some breaks are missing in the switch-case – and yes, the resulting symptoms were already reported as a bug in JIRA.

ESP32: the future is now!

Ok, the first drafts of the ESP32 (successor of the ESP8266) as presoldered boards (mostly called development boards) appeared in 2016, so acquiring something like this in the current year is nothing special. BUT: I’ve had my hands on an ESP8266 board (NodeMCU v3, if I remember correctly) and I was astonished! Integrated WiFi on such a tiny board and then even micro-USB for flashing, wow. For someone who played with the MSP430 chips from Texas Instruments for his bachelor thesis, this is finally something affordable. “Smaller” than the RPi, but I see a lot of potential for gathering and preparing data and the final distribution of information.

So, today I ordered from China (why buy from local or European shops, when you can save 60% if you have time?):

- 2 x ESP32S (240 MHz DualCore from Tensilica, 4 MiByte integrated flash memory, Wifi, Bluetooth)

- 2 x DHT22 temperature and humidity-sensor

- 2 x MAX7219 modules (8×8 LED)

- 2 x MAX7219 modules with 4 blocks in line (32×8 if you want to call it that way)

- 2 x 0.96″ OLED displays RGB(!) and 128×64 pixel

Altogether for less than 40 €, which is really crazy. Let us hope everything arrives intact within the next five weeks, and then the tinkering can start 🙂 I have two “weather sensor stations” in mind. Maybe a Raspberry as sink for the data. Maybe some Android app via Blynk. Let us see…

I have the skill(s) – give me the hardware! 🙂

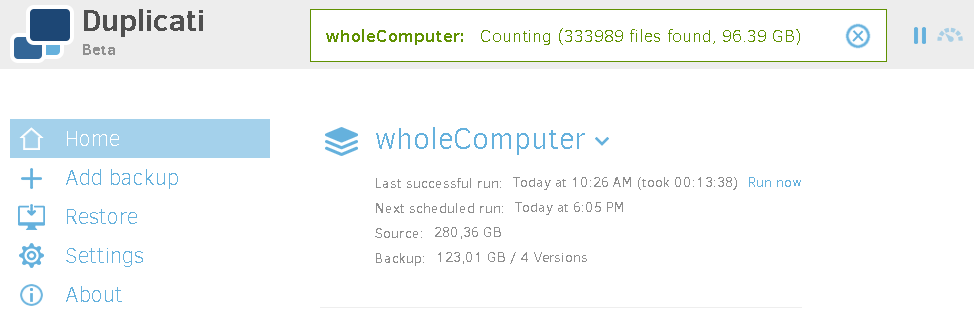

backup often, backup early: Duplicati

I wanted an open-source solution which allows backing up certain directories (or the whole PC) both locally and remotely. Some months ago I found Duplicati and have been using it at work ever since, where it currently backs up the content of the whole SSD partitions to an internal hard disk (not the most secure backup, I know, but given the constraints still better than no backup at all).

At home the content of the two home-directories (Linux) is transferred to a shared folder on the Synology-NAS.

duplicati on wikipedia // code under GNU LGPL

I can just repeat: working without a backup is the best path to failure.

In my history as “computer technician” I have ruined several drives, and especially when you just want to do a simple, default operation (*cough* move a partition on a hard drive *cough*), everything fails and there is no way to revert to the original state. Never again 🙂