0xDEADBEEF

Today I handed in my first #ProductProposal 🥳

And it was quite fun to write it.

Since pitching products is not my day-to-day business, I spent some time researching how to write such a proposal. My first search results were aimed at “elevator pitches” or “startup pitches”. So I had to dig deeper ..

Anyway: due to confidentiality I can’t showcase anything here. I can only share this: I drew some #mockups with draw.io, put in the technical features and spent the majority of the time on writing a teaser. Something that catches the audience immediately and makes them think: wow, we really have to sponsor this project!

Oh, why do I say “we”? #datamodul opened an innovation contest to source the ideas of their employees. Quite a clever idea. And a team can achieve so much more than a collection of individuals.

So .. even if that proposal is a dud, I learned some things, especially about writing a proper introduction. My first iteration was quite technical 😅

# Lessons learned:

* How to do a proper pitch, reduce technical details, appeal to a #vision, create a pitch deck with slides

* But also: go fast, break things. Too much time was spent on refinement and polishing. I had set myself an arbitrary date six weeks ago, and in retrospect I already had 80% of the material at that time. I’ll be better next time: #time-boxing upfront.

Coursera: project management

I finished the “Foundations of Project Management” course over the weekend. This is the first milestone out of six for the “Google Project Management: Professional Certificate”.

This was my first online learning experience with Coursera and I liked it. A quite refreshing mix of videos, quizzes and reading which kept me on track. I even finished some days earlier than anticipated by the regular four-week schedule 😅

I’ll definitely continue because my day-to-day business requires a lot of management .. and even with some hands-on experience, there is always the opportunity to improve.

The best weather app so far ;)

People love forecasts. And almost everyone I know has one or two apps on their device to check them.

But nothing beats

```shell
while :; do clear; curl wttr.in/Laim; date; sleep 60; done
```

‘Kein Backup, kein Mitleid.’

A common German saying meaning “no backup, no pity”. Five weeks ago, when another ransomware wave became public and I saw the disaster with the Western Digital network drives, I realized that despite using a NAS and regularly backing up data from different devices to this RAID 1 device, I have no real “hard” backup. My definition: a 1:1 mirror of the NAS content which is NOT connected to any network at all and is stored in a different room or even a different flat. Disconnected because: are you sure everything is secured? No hidden bugs either? Physically distant because: what if the NAS catches fire and the backup is affected too?

So I did a quick review of the current state (the NAS is a DS213 with the latest updates; it also offers a USB 3.0 interface; it has two 4 TB drives inside, which are filled to 3.5 TB).

Decided to buy a 6 TB external 3.5″ hard drive (not an SSD, because of possible data loss when left unpowered for years) for 122 € (compared to the price of losing just a fraction of the photos: forget it!).

Formatted it as ext4 on a Linux machine, then attached it to the Synology DS213. It was not detected, even though the DS213 claims to support ext4. So we let the DS213 format it again (don’t ask me ..).

Let’s say it outright: I don’t want to use their proprietary backup-solutions. I also don’t want any kind of encryption.

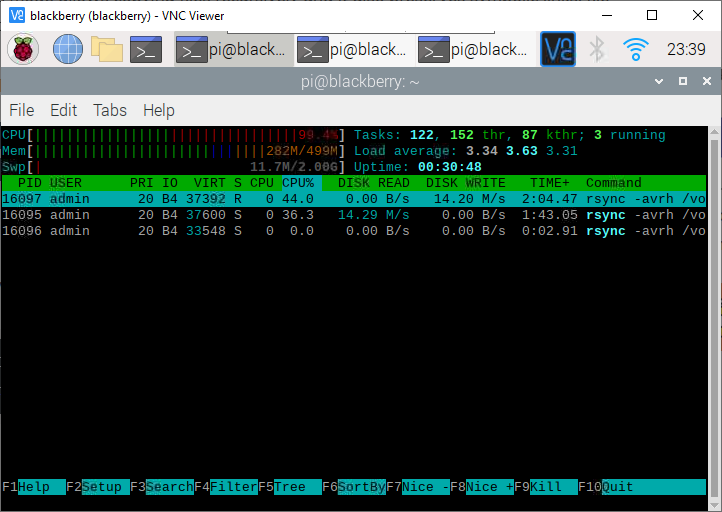

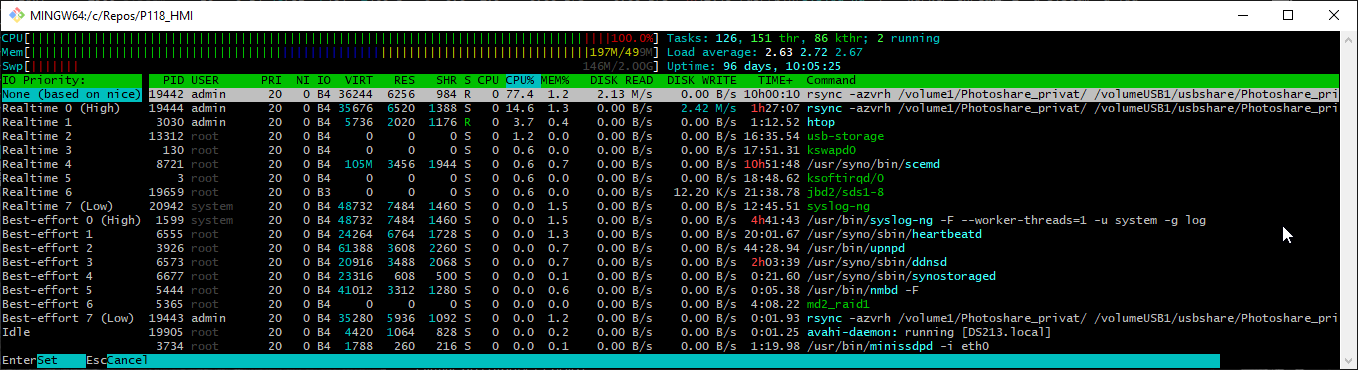

SSH’ed into the DS213 (SSH is off by default, so I had enabled it beforehand). Then I checked which partitions had to be copied, pieced together a chained rsync command (sorted by priority) and let it run. Detaching the session via ‘nohup’ wasn’t working.

```shell
rsync -avrh /volume1/Photoshare_privat/ /volumeUSB1/usbshare/Photoshare_privat/ && \
rsync -avrh /volume1/homes/Marcel/ /volumeUSB1/usbshare/homes/Marcel/ && \
rsync -avrh /volume1/homes/ruzica/ /volumeUSB1/usbshare/homes/ruzica/ && \
rsync -avrh /volume1/homes/admin/ /volumeUSB1/usbshare/homes/admin/ && \
rsync -avrh /volume1/Camera/ /volumeUSB1/usbshare/Camera/ && \
rsync -avrh /volume1/photo/ /volumeUSB1/usbshare/photo/ && \
rsync -avrh /volume1/Musik/ /volumeUSB1/usbshare/Musik/
```
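The same chain could also be written as a loop over the share names, which makes the priority order easier to change. This is only a sketch: the function name `backup_shares` and the variables `SRC_ROOT`, `DST_ROOT` and `DRY_RUN` are made up for illustration; only the paths and rsync flags come from the command above. By default it prints the commands instead of running them.

```shell
#!/bin/sh
# Hypothetical helper, not the original command: SRC_ROOT, DST_ROOT and
# DRY_RUN are assumed names; paths and flags are taken from the chain above.
SRC_ROOT="${SRC_ROOT:-/volume1}"
DST_ROOT="${DST_ROOT:-/volumeUSB1/usbshare}"

backup_shares() {
    # Process the shares in the given order (highest priority first).
    for share in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            # Dry run: only print what would be executed.
            echo "rsync -avrh $SRC_ROOT/$share/ $DST_ROOT/$share/"
        else
            # Stop at the first failure, like the '&&' chain does.
            rsync -avrh "$SRC_ROOT/$share/" "$DST_ROOT/$share/" || return 1
        fi
    done
}

# Prints the seven rsync commands; nothing is copied while DRY_RUN is 1.
backup_shares Photoshare_privat homes/Marcel homes/ruzica homes/admin Camera photo Musik
```

Calling it with `DRY_RUN=0` would run the copies for real.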

It quickly turned out that the single-core CPU of the DS213 is the bottleneck: it runs at 100%, so write speeds of a mere 8-9 MB/s are achieved even though the HDD can write up to 120 MB/s. My back-of-the-napkin estimate is 4-5 days for all data. Subsequent backups should run faster because they are incremental, and most of the data is written once and almost never changed.

Quick summary: I was baffled that, despite having some experience in IT, I did not have a _real_ backup before. And from discussions with peers I know that almost none of those working in IT have one either.

The proposed solution will save my family and me from a 100% loss.

edit 20210801: ideas need some time to mature. One of the Raspberry Pi 3Bs now takes care of the rsync calls to relieve the real PC (it hosts RocketChat anyway and is therefore online 24/7), and rsync now runs without compression; the resulting write speed is in the range of 11-15 MiB/s.
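The napkin math behind the 4-5 days can be sketched like this. It assumes decimal units (3.5 TB = 3,500,000 MB) and a constant write speed; the function name `estimate_days` is made up for illustration.

```shell
# Rough transfer-time estimate: size / throughput, converted to days.
estimate_days() {
    # $1 = data size in MB, $2 = throughput in MB/s; prints days with one decimal
    LC_ALL=C awk -v size="$1" -v rate="$2" \
        'BEGIN { printf "%.1f\n", size / rate / 86400 }'
}

estimate_days 3500000 8   # 3.5 TB at 8 MB/s -> 5.1
estimate_days 3500000 9   # 3.5 TB at 9 MB/s -> 4.5
```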

grip: render Markdown as PDF

.. and other things, where you assumed it should be quite easy. ..

Wrote a short guide in Markdown on how to verify some information. Local rendering works (most of the time via PyCharm or online at GitHub).

Now: how to export it as PDF? I realized that the receiver might not be able to display it properly.

* printing from PyCharm: failed

* VisualStudio-Plugin: no VS, no plugin

* any of the *nix-ways: not possible at that moment

* using a web-renderer: not allowed, because confidential data

UFF!

Python to the rescue!

```shell
pip install grip
grip file.md
```

Grip starts a local Flask server and prints a localhost:<port> URL (port 6419 by default); just open it with the browser of your choice and then print as PDF.

žžžžžž

Two family members’ names sport a nice z with caron: ž.

Win 10: ALT+0158 (number pad with numlock on)

Linux (unicode): CTRL+SHIFT+U, then type 017E and confirm with Enter
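For scripts, the character can also be produced without any keyboard shortcut. A minimal sketch, assuming a UTF-8 terminal; the function name `z_caron` is made up for illustration. U+017E is encoded in UTF-8 as the two bytes 0xC5 0xBE, written here as octal escapes because `\u` escapes are not portable across shells.

```shell
# Hypothetical helper: prints ž (U+017E) as its UTF-8 byte sequence 0xC5 0xBE.
z_caron() {
    printf '\305\276'
}

z_caron; printf '\n'      # shows the character on a UTF-8 terminal
z_caron | od -An -tx1     # hex dump of the two bytes
```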

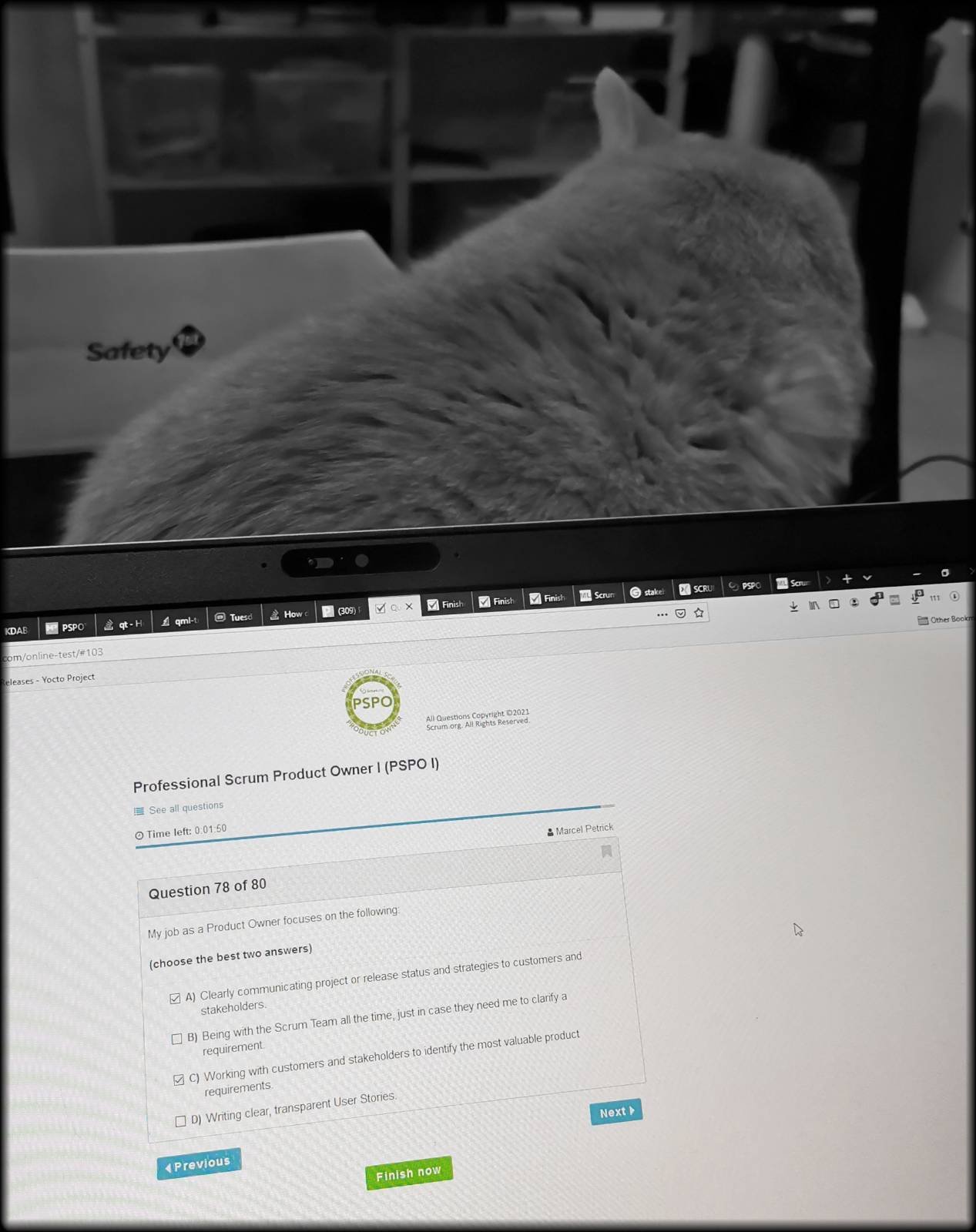

Scrum: PSPO I certification

With the power of the mega-fluff I’ve passed the test and can now call myself ‘Professional Scrum Product Owner’.

Of course, it is just a small step. But a series of small steps will carry over long distances.

Sincere thanks go to Glenn Lamming & Boris Steiner for their interactive way to teach the fundamentals 👍

Is this a bug?

Let’s assume the following situation: in the past a feature was implemented, the full DoD (code review, manual test, stakeholder approval, ..) was met and the ticket was successfully closed.

Suddenly someone realizes that a second feature, which is closely related to the first one, should have that behaviour as well. The circle of people cavilling about the missing functionality is identical to the people who wrote the requirements and validated the implementation of feature one.

So, is this a bug?

No, this is a new task!

Calling it a bug devalues the efforts of everyone involved in the first place, because the work was delivered as ordered. Nobody complained, until now.

Let me explain, because this sounds like nitpicking and fussing about wording. The underlying problem is this: when stakeholders and requirements engineers could not capture all use cases, or had implicit expectations and did not write them down before (or during) development, you can’t suddenly call the lack of some additional change a bug.

Or you can. If you want to piss off your developers :>

Another aspect: at the end of sprints or releases, reviews are done. And at that very moment only numbers matter. You won’t dive into the details of each ticket, so only the binning of the types of solved issues matters. And mislabelling the aforementioned tasks as bugs can make a good team result look like a “barely made it over the finishing line”.

education 2020

People always talk about shopping & happiness. Now I can understand :’) Just booked my education for the upcoming weeks: “Workshop: Deep Learning für Natural Language Processing (NLP)” && “iSAQB Certified Professional for Software Architecture – Foundation Level (CPSA-FL)” <3 #neverstoplearning

nota bene: and HSK1 for Mandarin will be tackled as well!

Covid-19, PanOffice and how my education-plan imploded

A note before the actual post: a week ago, while proofreading, I noticed that some of the following statements, which are meant to be truly neutral, could and would leave a stale aftertaste. That’s definitely not the intention; it’s more a snapshot of the current state (like for a chronicle). On the other hand: if I censored it any further, I might as well trash the whole post, because tens of thousands of texts have already been written about the current state of human society and the impact of Covid-19.

So, read it with a pinch of salt: we are lucky to be healthy and not to suffer from harsher conditions.

More than two months ago SARS-CoV-2-induced infections scaled up in Germany and hit us without much preparation. “Us” includes me, my family, my workplace and society as a whole.
