‘Kein Backup, kein Mitleid.’

A common German saying meaning “no backup, no pity”. Five weeks ago, when another ransomware wave became public and I saw the disaster with the Western Digital network drives, I realized that despite using a NAS and regularly backing up data from different devices to this RAID 1 device, I had no real “hard” backup. By my definition, that is a 1:1 mirror of the NAS content which is NOT connected to any network at all and is stored physically in a different room or even a different flat. Disconnected, because: are you sure everything is secured? No hidden bugs either? Physically distant, because: what if the NAS catches fire and the backup is affected as well?

So I did a quick review of the current state (the NAS is a DS213 with the latest updates; it also offers a USB 3.0 interface; it has two 4 TB drives inside, which are filled to 3.5 TB).

Decided to buy a 6 TB external 3.5″ hard drive (not an SSD, because of possible data loss while powered down for years) for 122 € (compared to the price of losing just a fraction of the photos: forget it!).

Formatted it as ext4 on a Linux machine, then attached it to the Synology DS213. It was not detected, despite the DS213 claiming to support ext4. So I let the DS213 format it again (don’t ask me ..).

Let’s say it outright: I don’t want to use their proprietary backup-solutions. I also don’t want any kind of encryption.

SSH’ed into the DS213 (SSH has to be activated first, because it is off by default). Then I checked which partitions have to be copied, puzzled together a chained rsync command (sorted by priority) and let it run. Detaching the session via ‘nohup’ wasn’t working.

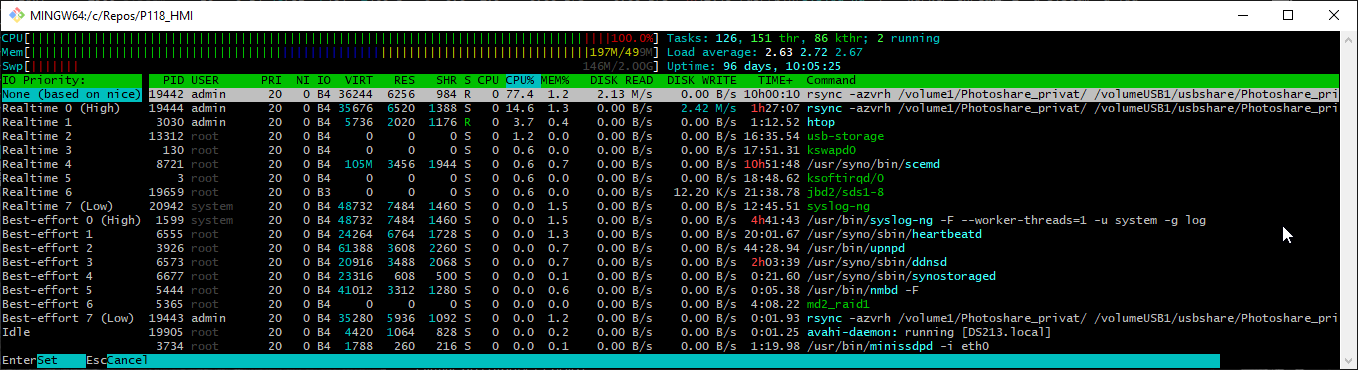

    rsync -avrh /volume1/Photoshare_privat/ /volumeUSB1/usbshare/Photoshare_privat/ && \
    rsync -avrh /volume1/homes/Marcel/ /volumeUSB1/usbshare/homes/Marcel/ && \
    rsync -avrh /volume1/homes/ruzica/ /volumeUSB1/usbshare/homes/ruzica/ && \
    rsync -avrh /volume1/homes/admin/ /volumeUSB1/usbshare/homes/admin/ && \
    rsync -avrh /volume1/Camera/ /volumeUSB1/usbshare/Camera/ && \
    rsync -avrh /volume1/photo/ /volumeUSB1/usbshare/photo/ && \
    rsync -avrh /volume1/Musik/ /volumeUSB1/usbshare/Musik/
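The chained command can also be generated from a priority list, which makes it easier to add or reorder shares later. This is only a sketch, assuming Python 3 is available on whichever machine runs the backup; the function names `build_rsync_commands` and `run_backup` are made up for illustration, while the paths and the rsync options are taken from the command above.

```python
import subprocess

SRC_ROOT = "/volume1"
DST_ROOT = "/volumeUSB1/usbshare"

# Shares in priority order, as in the chained shell command.
SHARES = [
    "Photoshare_privat",
    "homes/Marcel",
    "homes/ruzica",
    "homes/admin",
    "Camera",
    "photo",
    "Musik",
]


def build_rsync_commands(shares, src_root=SRC_ROOT, dst_root=DST_ROOT):
    """Return one rsync argument list per share, in the given order."""
    return [
        ["rsync", "-avrh", f"{src_root}/{share}/", f"{dst_root}/{share}/"]
        for share in shares
    ]


def run_backup(commands):
    """Run the commands one after another and stop at the first failure."""
    for command in commands:
        # check=True raises CalledProcessError on a non-zero exit code,
        # which mirrors the '&&' chaining of the shell version.
        subprocess.run(command, check=True)


# To start the copy, call: run_backup(build_rsync_commands(SHARES))
```

Building the commands separately from running them means the list can be printed and checked before anything is copied.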

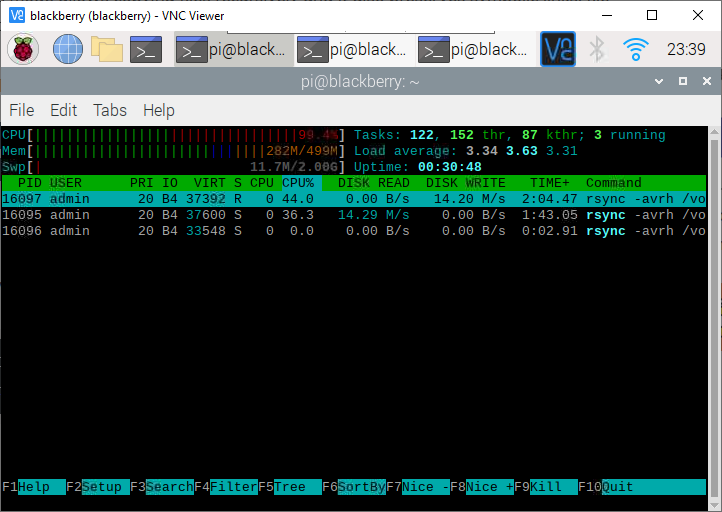

It quickly turns out that the single-core CPU of the DS213 is the bottleneck: it runs at 100%, so write speeds of merely 8-9 MB/s are achieved despite the HDD being capable of writing up to 120 MB/s. My back-of-the-napkin estimate is 4-5 days for all data. Subsequent backups should run faster, because they are incremental, and most of the data is written once and almost never changed.
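The napkin estimate can be checked in a few lines. This sketch assumes decimal units (1 TB = 10^12 bytes, 1 MB = 10^6 bytes) and a constant transfer rate; the helper name `transfer_days` is made up for illustration.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(size_bytes, rate_bytes_per_s):
    """Days needed to copy size_bytes at a constant rate."""
    return size_bytes / rate_bytes_per_s / SECONDS_PER_DAY


DATA_BYTES = 3.5e12  # 3.5 TB on the NAS

for rate_mb in (8, 9, 120):
    days = transfer_days(DATA_BYTES, rate_mb * 1e6)
    print(f"{rate_mb:>3} MB/s: {days:.1f} days")
```

At 8-9 MB/s this gives roughly 4.5 to 5.1 days, whereas the full 120 MB/s of the drive would need only about a third of a day.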

Quick summary: I was baffled that, despite having some experience in IT, I did not have a _real_ backup before. And from discussions with peers I know that almost none of those working in IT have one either.

The proposed solution will save my family and me from a total loss.

edit 20210801: ideas need some time to mature. Now one of the Raspberry Pi 3Bs takes care of the rsync calls to relieve the real PC (it hosts RocketChat anyway and is therefore online 24/7), and rsync now runs without compression; the resulting write speed is in the range of 11-15 MiB/s.

grip: render Markdown as PDF

.. and other things, where you assumed it should be quite easy. ..

Wrote a short guide in Markdown on how to verify some information. Local rendering works (most of the time via PyCharm or online at GitHub).

Now: how to export it as PDF? I realized that the receiver might not be able to display it properly.

* printing from PyCharm: failed

* VisualStudio-Plugin: no VS, no plugin

* any of the *nix-ways: not possible at that moment

* using a web-renderer: not allowed, because confidential data

UFF!

Python to the rescue!

    pip install grip
    grip file.md

Grip starts a local Flask server and prints a localhost:<port> URL. Just open it with the browser of your choice and then print the page as PDF.

žžžžžž

Two family members’ names sport a nice z with caron: ž.

Win 10: ALT+0158 (number pad with numlock on)

Linux (unicode): CTRL+SHIFT+U, then type 017E and confirm with Enter
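Both shortcuts point at the same character, which a short check makes visible. The sketch assumes that the ALT code with a leading zero refers to the Windows-1252 code page, as on Western-language Windows installations; `describe_char` is a made-up helper name.

```python
import unicodedata


def describe_char(char):
    """Return the Unicode name, the code point and the Windows-1252 byte."""
    return {
        "name": unicodedata.name(char),
        "code_point": f"U+{ord(char):04X}",
        "cp1252": char.encode("cp1252")[0],
    }


info = describe_char("\u017e")
print(info["name"])        # LATIN SMALL LETTER Z WITH CARON
print(info["code_point"])  # U+017E
print(info["cp1252"])      # 158
```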

Scrum: PSPO I certification

With the power of the mega-fluff I’ve passed the test and can now call myself ‘Professional Scrum Product Owner’.

Of course, it is just a small step. But a series of small steps will carry over long distances.

Sincere thanks go to Glenn Lamming & Boris Steiner for their interactive way to teach the fundamentals 👍

64 GiB USB 3.1 stick and VM problems

Got a new 64 GiB USB3.1 stick (Samsung) and that’s where the problems started..

It was formatted with exFAT as one partition, which an embedded Linux could not mount.

So I tried to use the Windows standard tools (both via Explorer and via PowerShell) to create a FAT32 partition smaller than 32 GiB. Neither worked.

Second thought: let’s do this inside the Kubuntu-VM!

* media was not shown -> fixable by installing the extension pack (else just USB 1.1), then adding in the VM-settings a USB3.0-filter for the respective device, then fire up the VM and activate it in the “virtualbox-bar > devices”.

* check with ‘lsblk’ if there is a new block based device

* format with ‘gparted’ if necessary: one primary partition of 20 GiB FAT32 and the rest ext4 worked like a charm for me (of course, Windows can’t handle ext4 ..). GParted is really a lifesaver, I have been using it for a decade now.

* mount with ‘sudo mount /dev/sdb1 ~/Desktop/usbsticky’ if the OS does not auto-mount on plugging in (use the device name that ‘lsblk’ showed)

Cheap wifi repeater

A long time ago (see: https://marcelpetrick.bplaced.net/wp_solutionsnotcode/?p=1266 ) I announced the plan to set up one or more ESP8266 as a wifi repeater, and after some tinkering I did.

The device has been running in different locations from summer 2020 until now.

Code comes from martin-ger – kudos to him. Flash the binary and you’re done. Used the ESP-download-tool, as far as I can remember.

The wrapping is quite makeshift, but works. The blinking LED is a bit annoying if used on the balcony at night. I will fix the hole for the cable with hot glue. It can run from a battery pack or via a USB charger. Ah, yes, the price for the full package is around 3 €.

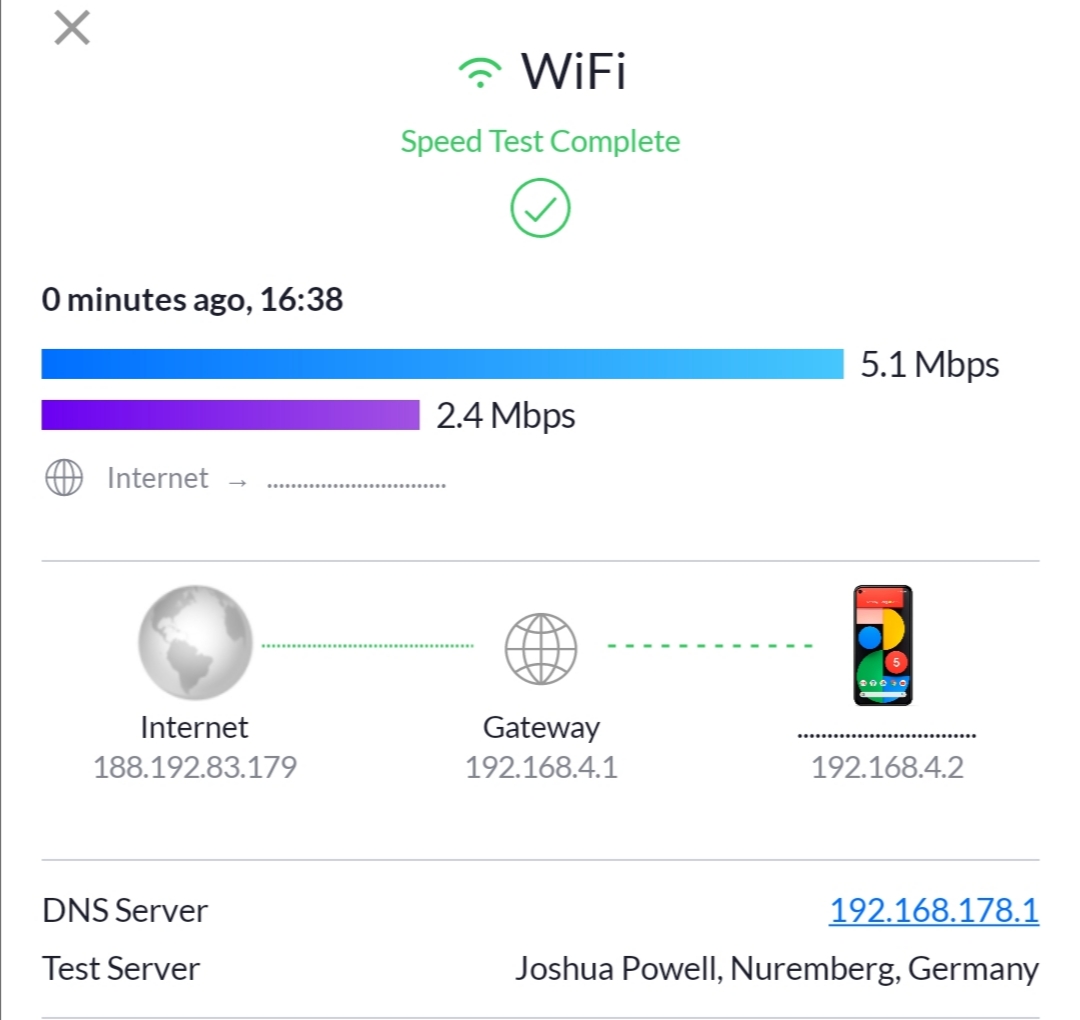

Performance: throughput is 5 Mbps down and 2 Mbps upstream. Not really much, but better than the local 4G ..

If the ESP8266 is connected via USB to a serial terminal (115200 baud), the output at boot looks like this:

    WiFi Repeater V1.2 starting
    Config found and loaded
    Starting Console TCP Server on 7777 port
    mode : sta(3c:71:bf:3a:a2:74) + softAP(3e:71:bf:3a:a2:74)
    add if0
    add if1
    dhcp server start:(ip:192.168.4.1,mask:255.255.255.0,gw:192.168.4.1)
    bcn 100
    shoscandone
    state: 0 -> 2 (b0)
    STA: SSID:Katzengast24 PW: removed;) [AutoConnect:1]
    AP: SSID:newGreen PW: removed;) IP:192.168.4.1/24
    Clock speed: 80
    CMD>state: 2 -> 3 (0)
    state: 3 -> 5 (10)
    add 0
    aid 1
    cnt
    connected with Katzengast24, channel 10
    dhcp client start...
    connect to ssid Katzengast24, channel 10
    CMD>
    CMD>
    CMD>
    STA: SSID:Katzengast24 PW: ;) [AutoConnect:1]
    AP: SSID:newGreen PW: ;) IP:192.168.4.1/24
    Clock speed: 80
    CMD>ip:192.168.178.126,mask:255.255.255.0,gw:192.168.178.1
    ip:192.168.178.126,mask:255.255.255.0,gw:192.168.178.1,dns:192.168.178.1
    add 1
    aid 1
    station: a4:9b:4f:05:55:be join, AID = 1
    station: a4:9b:4f:05:55:bejoin, AID = 1
    LmacRxBlk:1
    LmacRxBlk:1
    LmacRxBlk:1
    LmacRxBlk:1

Configure via serial by doing:

    set ssid Katzengast24
    set password removed;)
    set ap_ssid newGreen
    set ap_password removed;)
    save
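The same commands could also be sent from a script instead of typing them into a terminal. This is a rough sketch under several assumptions: the third-party pyserial package is installed, the console accepts lines terminated with CR+LF, and the port name in the usage note (`/dev/ttyUSB0`) is only a placeholder. The helper names are made up; only the `set ...` and `save` commands and the baud rate come from the text above.

```python
import time


def build_config_commands(ssid, password, ap_ssid, ap_password):
    """Return the console commands in the order used above."""
    return [
        f"set ssid {ssid}",
        f"set password {password}",
        f"set ap_ssid {ap_ssid}",
        f"set ap_password {ap_password}",
        "save",
    ]


def send_commands(port, commands, baudrate=115200):
    """Write each command to the serial console and print the reply line."""
    import serial  # pyserial; imported here so the builder works without it

    with serial.Serial(port, baudrate, timeout=1) as connection:
        for command in commands:
            # Assumption: the console expects CR+LF as line ending.
            connection.write((command + "\r\n").encode("ascii"))
            time.sleep(0.2)
            reply = connection.readline()
            print(reply.decode("ascii", errors="replace").rstrip())
```

Usage would look like `send_commands("/dev/ttyUSB0", build_config_commands("uplink", "secret", "repeater", "secret2"))`, with your own network names and passwords.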

Windows (10) doesn’t allow the same resource twice

So, the NAS* I have used for years is separated into different partitions: some for general access and things, and one for music, which has a special user with read-only privileges.

Linux: no problem: mount as many shares as you like with Gigolo and you are done.

Windows: use that 90s-style network mount of the ‘explorer’ and add the share by resource and credentials.

“\\ds213\musik” .. Works, but is awkward and not comfortable.

Then you want to mount the second share with different credentials and you get “Can’t mount the same share** with different credentials”.

Workaround:

Mount once by resource name and once by IP: “\\192.168.178.178\musik”

Another approach would be an additional name for the NAS in the hosts file.

* DS213 from Synology: 2 bay; now running 24/7 for 5 (?) years; upgraded in between from 2 TB drives to 4 TB with complete replication

** is actually “different shares at the same host”, but who am I with my limited knowledge?

RamBLE and the (german) Covid19 tracker

Quicker posts, less delay! One of the goals for 2021 was to 0. write more often, 1. write sooner after ‘doing’ and 2. thereby also cover more of the things which were ‘touched’.

There’s still a pile of topics left from 2020 xD

In November a colleague and I talked about one of his acquaintances who wrote an iOS app which can show how many “Corona Warn App” users are nearby. I was puzzling over this and also wondered if a special app or some Bluetooth receiver tinkering is really needed. I still own that one BT receiver for the Raspberry.

First: a quick check for BT sniffers in the Play Store showed RamBLE.

Second: 5 min of googling found the UUID used for the exposure notification: “fd6f”.

So, let’s combine and see what is shown for a location I traverse often:

Quintessence: check the market before developing something 😉
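For anyone who wants to tinker anyway: the short UUID “fd6f” is a 16-bit value that expands to a full 128-bit UUID via the Bluetooth base UUID. The sketch below shows the expansion and a simple filter; the function names and the sample scan data are made up for illustration, and how the advertisements are actually collected (app export, BT receiver, ..) is left open.

```python
BLUETOOTH_BASE_SUFFIX = "-0000-1000-8000-00805f9b34fb"
EXPOSURE_NOTIFICATION_UUID16 = 0xFD6F


def expand_uuid16(short_uuid):
    """Expand a 16-bit Bluetooth UUID to its 128-bit string form."""
    return f"{short_uuid:08x}{BLUETOOTH_BASE_SUFFIX}"


def count_exposure_devices(scan_results):
    """Count devices that advertise the exposure notification service.

    scan_results maps a device address to its list of service UUID strings.
    """
    wanted = expand_uuid16(EXPOSURE_NOTIFICATION_UUID16)
    return sum(
        1
        for service_uuids in scan_results.values()
        if wanted in (uuid.lower() for uuid in service_uuids)
    )


# Hypothetical scan result, only to show the expected data shape.
sample_scan = {
    "aa:aa:aa:aa:aa:01": ["0000FD6F-0000-1000-8000-00805F9B34FB"],
    "aa:aa:aa:aa:aa:02": ["0000180f-0000-1000-8000-00805f9b34fb"],
    "aa:aa:aa:aa:aa:03": ["0000fd6f-0000-1000-8000-00805f9b34fb"],
}
print(count_exposure_devices(sample_scan))  # 2
```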

Project Euler – mathematical riddles which require some programming skills

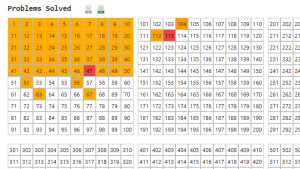

Took me a while to write about this, but I really love Project Euler. The page is a collection of math challenges which require some programming (I saw just one which could have been computed by a closed formula without any help). The first ten are quite easy to solve and are more commonly known math problems. Prime numbers and combinatorics play a strong role. But then the difficulty rises quite quickly. Usually it takes me one to two hours to write a Python solution for one. If I wrote unit tests for every single method, like I should, and not just for a few selected ones, then I guess it would take 50% more.

It’s great: each problem is a closed, separate problem which requires some algorithmic thinking and, of course, a proper implementation. If you choose the wrong path, time or space complexity will kill your ambitions quite quickly. But proper solutions are computed in less than a minute most of the time.

Most of the time I rely on basic Python structures and common libraries. But I’ve also given NumPy, itertools, etc. a try. That speeds up the process quite a bit.
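To give an idea of the typical building blocks, here is a generic prime sieve. It is not a solution to any particular problem, just a sketch of the Sieve of Eratosthenes using only the standard library; it stores one byte per number in a `bytearray`, which is already far more compact than a list of Python booleans.

```python
import math


def primes_up_to(limit):
    """Return all primes <= limit using the Sieve of Eratosthenes."""
    if limit < 2:
        return []
    is_prime = bytearray([1]) * (limit + 1)  # one flag byte per number
    is_prime[0] = is_prime[1] = 0
    for n in range(2, math.isqrt(limit) + 1):
        if is_prime[n]:
            # Cross out all multiples of n, starting at n*n.
            multiples = range(n * n, limit + 1, n)
            is_prime[n * n::n] = bytes(len(multiples))
    return [n for n, flag in enumerate(is_prime) if flag]


print(primes_up_to(30))  # [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]
```

The choice of data structure matters quickly here: one byte per flag is fine for limits in the millions, but for much larger ranges even that becomes a memory problem.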

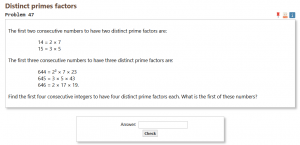

My next goal is to fix problem 47, because then I’ve handed in solutions for all of the first fifty problems.

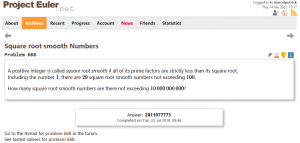

The biggest challenge so far (and, for me, also the one with the highest difficulty level) was problem 668. Due to a really big (80 GiB!) boolean array the computer had a hard time swapping memory, so it took almost 36 hours to finish. By the way: fewer than 900 people worldwide have solved this problem #tinyflakeofpride

Of course, several geniuses have dedicated pages to optimal solution strategies, which is a nice idea. But I avoid them. Most of the time it is more helpful to step back and think, away from the display, about the problem and whether the chosen approach was a good one. A solution obtained by cheating brings no reward.

micro:bit v2 arrived :)

Today (finally) my micro:bit v2 arrived. Had to unwrap it immediately after dinner and play around with the speech synthesis👌🏻 A few lines of MicroPython and things got heated.

If you’re not creating anything nowadays, then it’s your own fault 🐒